Salvatore Domenic Morgera, University of South Florida

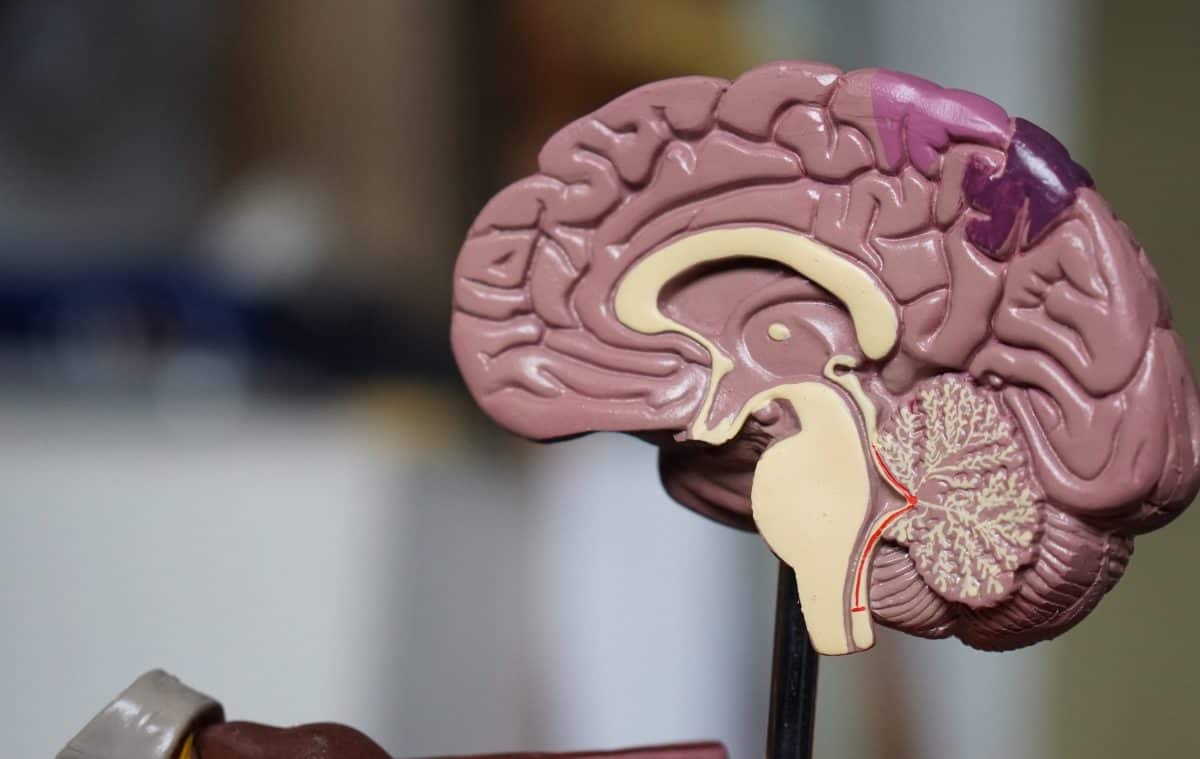

How the brain works remains a puzzle with only a few pieces in place. Of these, one big piece is actually a conjecture: that there’s a relationship between the physical structure of the brain and its functionality.

The brain’s jobs include interpreting touch, visual and sound inputs, as well as speech, reasoning, emotions, learning, fine control of movement and many others. Neuroscientists presume that it’s the brain’s anatomy – with its hundreds of billions of nerve fibers – that makes all of these functions possible. The brain’s “living wires” are connected in elaborate neurological networks that give rise to human beings’ amazing abilities.

It would seem that if scientists could map the nerve fibers and their connections, and record the timing of the impulses that flow through them during a higher function such as vision, they should be able to explain how one sees. Researchers are getting better at mapping the brain using tractography – a technique that visually represents nerve fiber routes using 3D modeling. And they’re getting better at recording how information moves through the brain by using enhanced functional magnetic resonance imaging to measure blood flow.

But in spite of these tools, no one seems much closer to figuring out how we really see. Neuroscience has only a rudimentary understanding of how it all fits together.

To address this shortcoming, my team’s bioengineering research focuses on relationships between brain structure and function. The overall goal is to scientifically explain all the connections – both anatomical and wireless – that activate different brain regions during cognitive tasks. We’re working on complex models that better capture what scientists know of brain function.

Ultimately a clearer picture of structure and function may fine-tune the ways brain surgery attempts to correct structure and, conversely, medication tries to correct function.

Wireless hot spots in your head

Cognitive functions such as reasoning and learning use a number of distinct brain regions in a time-sequenced manner. Anatomy alone – the neurons and nerve fibers – cannot explain the excitation of these regions, concurrently or in tandem.

Some connections are actually “wireless.” These are electric near-field connections, and not the physical connections captured in tractographs.

My research team has worked for several years detailing the origins of these wireless connections and measuring their field strengths. A very simple analogy of what is going on in the brain is how a wireless router works. The internet is delivered to a router via a wired connection. The router then sends the information to your laptop using wireless connections. The overall system of information transfer works because of both wired and wireless connections.

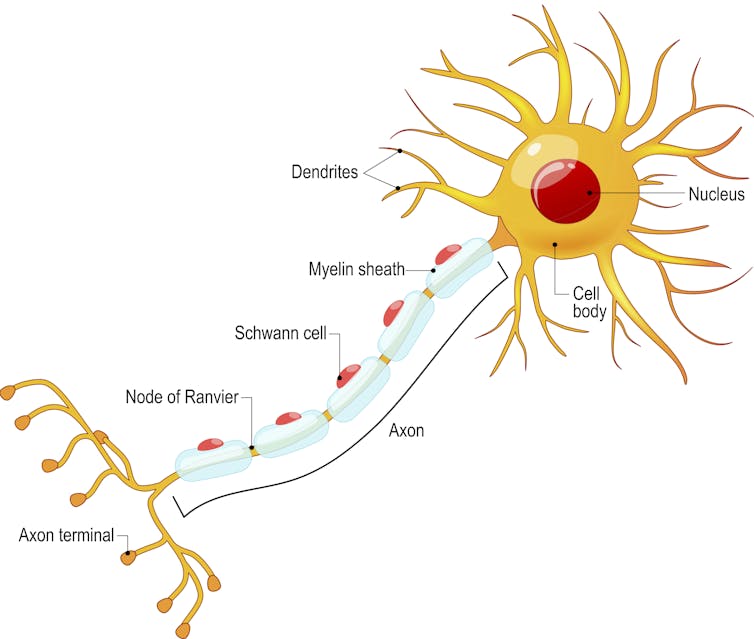

In the case of the brain, nerve cells conduct electrical impulses down long threadlike arms called axons from the cell body to other neurons. Along the way, wireless signals are naturally emitted from uninsulated portions of nerve cells. These spots that lack the protective insulation that wraps the rest of the axon are called nodes of Ranvier.

The nodes of Ranvier allow charged ions to diffuse in and out of the neuron, propagating the electrical signal down the axon. As the ions flow in and out, electric fields are generated. The intensity and structure of these fields depend on the activity of the nerve cell.

Here at the Global Center for Neurological Networks we’re focusing on how these wireless signals work in the brain to communicate information.

The brain’s nonlinear world

Investigations into how excited brain regions match up with cognitive functions make another mistake when they rely on assumptions that lead to overly simple models.

Researchers tend to model the relationship as linear with a single variable, measuring the average size of a single brain region’s response. It’s the logic behind the design of the first hearing aid – if a person’s voice grows twice as loud, the ear should respond twice as much.

But hearing aids have greatly improved over the years as researchers have come to better understand that the ear is not a linear system, and a form of nonlinear compression is needed to match the sounds generated to the listener’s capability. In fact, most living things do not have sensing systems that respond in a linear, one-to-one manner to stimuli.

Linear models assume that if the input to a system is doubled, the output of that system will also be doubled. This is not true of nonlinear models, where many output values can exist for a single value of the input. And most scientists agree that neural computations are in fact nonlinear.
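The doubling test is easy to try on a toy system. The short Python sketch below is purely illustrative: `linear_response` and `compressive_response` are made-up stand-ins (a constant gain and a square-root curve), not models of the ear or the brain, and the stimulus value is arbitrary.

```python
import math


def linear_response(stimulus: float, gain: float = 2.0) -> float:
    """Hypothetical linear system: output is a constant multiple of the input."""
    return gain * stimulus


def compressive_response(stimulus: float) -> float:
    """Hypothetical nonlinear system: square-root compression (illustrative only)."""
    return math.sqrt(stimulus)


def doubling_ratio(system, stimulus: float) -> float:
    """Factor by which the output grows when the input is doubled."""
    return system(2 * stimulus) / system(stimulus)


for name, system in [("linear", linear_response),
                     ("compressive", compressive_response)]:
    ratio = doubling_ratio(system, 4.0)
    print(f"{name}: doubling the input multiplies the output by {ratio:.2f}")
```

The linear system doubles its output, as expected. The compressive one grows by only about 1.41 times (the square root of 2), so a model that assumes doubling would overestimate its response.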

A crucial question in understanding the link between brain and behavior is how the brain decides the best course of action among competing alternatives. For example, the frontal cortex of the brain makes optimal choices by computing many quantities, or variables – calculating the potential payoff, the probability of success and the cost in terms of time and effort. Since the system is nonlinear, doubling the potential payoff may make a final decision much more than twice as likely.
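A toy calculation shows how this can happen. Everything in the sketch below is an assumption made for illustration: the way payoff, probability of success and cost are combined into a single value, the logistic (S-shaped) choice rule, and the numbers themselves. It is not a description of what the frontal cortex actually computes.

```python
import math


def option_value(payoff: float, p_success: float, cost: float) -> float:
    """Hypothetical value of an option: expected payoff minus effort cost."""
    return payoff * p_success - cost


def choice_probability(value: float) -> float:
    """Hypothetical logistic rule mapping value to the chance of choosing it."""
    return 1.0 / (1.0 + math.exp(-value))


# Arbitrary illustrative numbers: a costly option with a modest payoff.
p_success, cost = 0.5, 4.0
base = choice_probability(option_value(2.0, p_success, cost))
doubled = choice_probability(option_value(4.0, p_success, cost))

print(f"chance of choosing the option:    {base:.3f}")
print(f"chance after doubling the payoff: {doubled:.3f}")
print(f"ratio: {doubled / base:.2f}")
```

With these particular numbers, doubling the payoff makes the option roughly two and a half times as likely to be chosen. Other starting values give different ratios, which is the point: in a nonlinear system the effect of doubling an input depends on where you start.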

Linear models miss out on the rich variety of possibilities that can occur in brain function, especially those beyond what anatomical structure would suggest. It’s like the difference between a 2D and a 3D representation of the world around us.

Current linear models just describe the average level of excitation in a brain region, or the flow across a brain surface. That’s much less information than my colleagues and I use when building our nonlinear models from both enhanced functional magnetic resonance imaging and electric near-field bioimaging data. Our models provide a 3D image of information flow across the surfaces of the brain and to depths within it – and get us closer to representing how it all works.

Normal anatomy, physiological dysfunction

My research team is intrigued by the fact that people with totally normal-looking brain structures can still have major functional problems.

As part of our research into neurological dysfunction, we visit individuals in hospice, bereavement support groups, rehabilitation care facilities, trauma centers and acute care hospitals. We are consistently startled to realize that people who have lost loved ones can exhibit symptoms similar to those of patients diagnosed with Alzheimer’s disease.

Grief is a series of emotional, cognitive, functional and behavioral responses to death or other kinds of loss. It’s not a state, but rather a process that can be either temporary or ongoing.

The healthy-looking brains of those suffering physiological grief do not have the same anatomical problems – including shrunken brain regions and disrupted connections between networks of neurons – that are found in those of people with Alzheimer’s disease.

We believe this is just one example of how the brain’s hot spots – those connections that are not physical – plus the richness of the brain’s nonlinear operation can lead to outcomes that wouldn’t be predicted by a brain scan. There are likely many more examples.

These ideas may point the way to the mitigation of serious neurological conditions through noninvasive means. Bereavement therapy and noninvasive, electric near-field neuromodulation devices can reduce the symptoms associated with the loss of a loved one. Perhaps these protocols and procedures should be more widely offered to patients suffering from neurological dysfunction where imaging does reveal anatomical changes. It could save some of these individuals from invasive surgical procedures.

Diagramming all the brain’s nonphysical links using our recent advances in electric near-field mapping, and employing what we believe are biologically realistic many-variable nonlinear models, will get us one step closer to where we want to go. Better understanding of the brain will not only reduce the need for invasive operating procedures to correct function, but will also lead to better models for what the brain does best: computation, memory, networking and information distribution.

Salvatore Domenic Morgera, Professor of Electrical Engineering and Bioengineering, Tau Beta Pi Eminent Engineer, University of South Florida

This article is republished from The Conversation under a Creative Commons license.